To Augment or Not to Augment? Diagnosing Distributional Symmetry Breaking

Symmetry-aware methods for machine learning, such as data augmentation and equivariant architectures, encourage correct model behavior on all transformations (e.g. rotations or permutations) of the original dataset. These methods can improve generalization and sample efficiency, under the assumption that the transformed datapoints are highly probable, or 'important', under the test distribution. In this work, we develop a method for critically evaluating this assumption. In particular, we propose a metric to quantify the amount of symmetry breaking in a dataset, via a two-sample classifier test that distinguishes between the original dataset and its randomly augmented equivalent. We validate our metric on synthetic datasets, and then use it to uncover surprisingly high degrees of symmetry-breaking in several benchmark point cloud datasets, constituting a severe form of dataset bias. We show theoretically that distributional symmetry-breaking can prevent invariant methods from performing optimally even when the underlying labels are truly invariant, for invariant ridge regression in the infinite feature limit. Empirically, the implication for symmetry-aware methods is dataset-dependent: equivariant methods still impart benefits on some symmetry-biased datasets, but not others, particularly when the symmetry bias is predictive of the labels. Overall, these findings suggest that understanding equivariance — both when it works, and why — may require rethinking symmetry biases in the data.

* Equal contribution. Blog post written by Elyssa Hofgard.

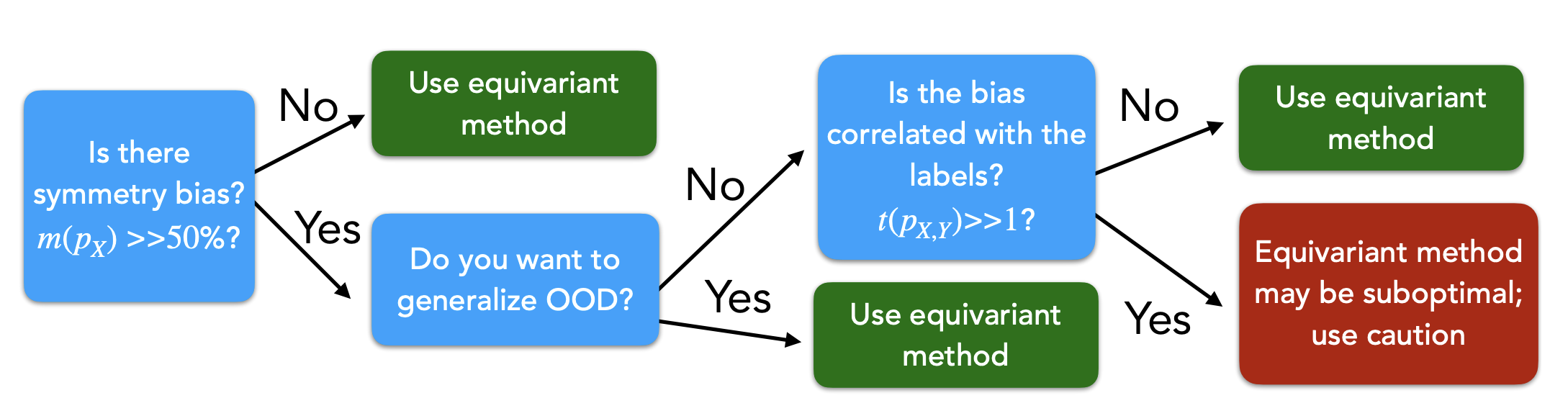

TL;DR: Many popular ML datasets are heavily “canonicalized” — objects almost always appear in the same orientation. We build a simple classifier test to measure this, show theoretically that it can cause data augmentation to hurt performance, and give practitioners a flowchart for diagnosing their own datasets.

Introduction

For a group transformation \(g \in G\) (e.g. a rotation or permutation), a model is equivariant if \(f(gx) = g f(x)\) (rotating the input rotates the output) and invariant if \(f(gx) = f(x)\) (the output is unchanged). Equivariant architectures enforce this by design; data augmentation encourages it by randomly applying \(g\) to training inputs. Equivariant models have had successes in multiple domains, including materials science.
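The two definitions can be checked numerically on a toy case. The sketch below assumes the group of planar rotations acting on 2D points; the helper names (`rotate`, `scale`, `norm`, `first_coord`) are illustrative and not taken from the paper.

```python
import math

def rotate(x, theta):
    """Group action g: rotate a 2D point counter-clockwise by theta."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * x[0] - s * x[1], s * x[0] + c * x[1])

def scale(x):
    """Equivariant map: scaling commutes with rotation."""
    return (2.0 * x[0], 2.0 * x[1])

def norm(x):
    """Invariant map: the length of x is unchanged by rotation."""
    return math.hypot(x[0], x[1])

def first_coord(x):
    """Symmetry-breaking map: depends on the orientation of x."""
    return x[0]

x, theta = (1.0, 2.0), math.pi / 2

# Equivariance: f(g x) == g f(x)
lhs, rhs = scale(rotate(x, theta)), rotate(scale(x), theta)
is_equivariant = all(math.isclose(a, b, abs_tol=1e-9) for a, b in zip(lhs, rhs))

# Invariance: f(g x) == f(x)
is_invariant = math.isclose(norm(rotate(x, theta)), norm(x), abs_tol=1e-9)

# A function that reads off a raw coordinate fails the invariance check.
breaks_symmetry = not math.isclose(
    first_coord(rotate(x, theta)), first_coord(x), abs_tol=1e-9
)

print(is_equivariant, is_invariant, breaks_symmetry)
```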

Both approaches assume that the ground-truth function \(f\) is equivariant. There is often a further, implicit assumption that the data distribution itself is symmetric, i.e. \(p(x) \approx p(gx)\). We refer to violations of this second assumption as distributional symmetry breaking, and they are the subject of this work. Our main contributions are:

- A classifier-based diagnostic: We introduce a simple two-sample test to measure distributional symmetry breaking.

- Theoretical analysis: We show that data augmentation can harm performance under certain distributional conditions.

- Empirical studies: We demonstrate that widely used 3D datasets are strongly canonicalized. We correspondingly evaluate the impacts of equivariant methods on datasets across domains and propose hypotheses for differing behaviors.

Distributional Symmetry Breaking

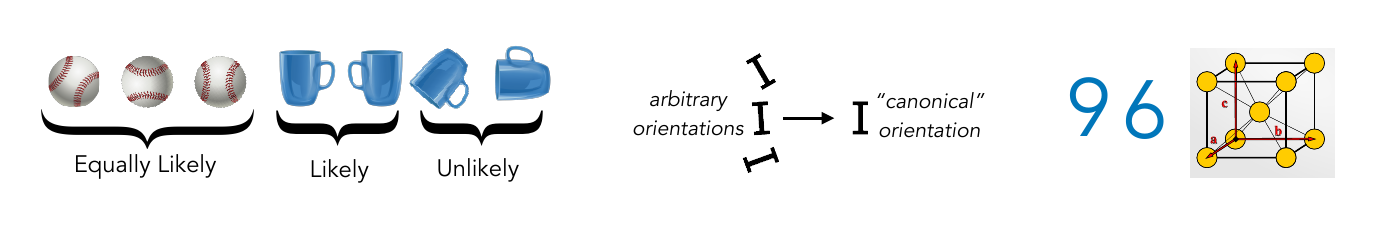

Distributional symmetry breaking may lead equivariant methods or data augmentation to discard useful information. For example, classifying 6s and 9s in MNIST is easy when the digits appear in their natural orientation, but it becomes harder under rotational augmentation.

Proposed Metric

The goal: define a metric \(m(p_X)\) that quantifies how far a data distribution \(p_X\) is from being group-symmetric — without assuming symmetry in the first place.

The key reference is the symmetrized density \(\bar{p}_X(x) := \int_{g \in G} p_X(gx)\, dg\), the closest group-invariant distribution to \(p_X\). Measuring \(m(p_X)\) reduces to measuring how distinguishable \(p_X\) and \(\bar{p}_X\) are from finite samples.
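As a concrete instance, assume \(G = C_4\), the group of planar rotations by multiples of 90°, with the uniform (normalized) measure, and write \(r\) for rotation by 90° (so \(r^4\) is the identity). The symbol \(r\) is introduced here only for illustration. The integral then reduces to an average over four rotated copies, and invariance follows by re-indexing the sum:

\[\bar{p}_X(x) = \frac{1}{4} \sum_{k=0}^{3} p_X(r^k x), \qquad \bar{p}_X(r x) = \frac{1}{4} \sum_{k=0}^{3} p_X(r^{k+1} x) = \bar{p}_X(x).\]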

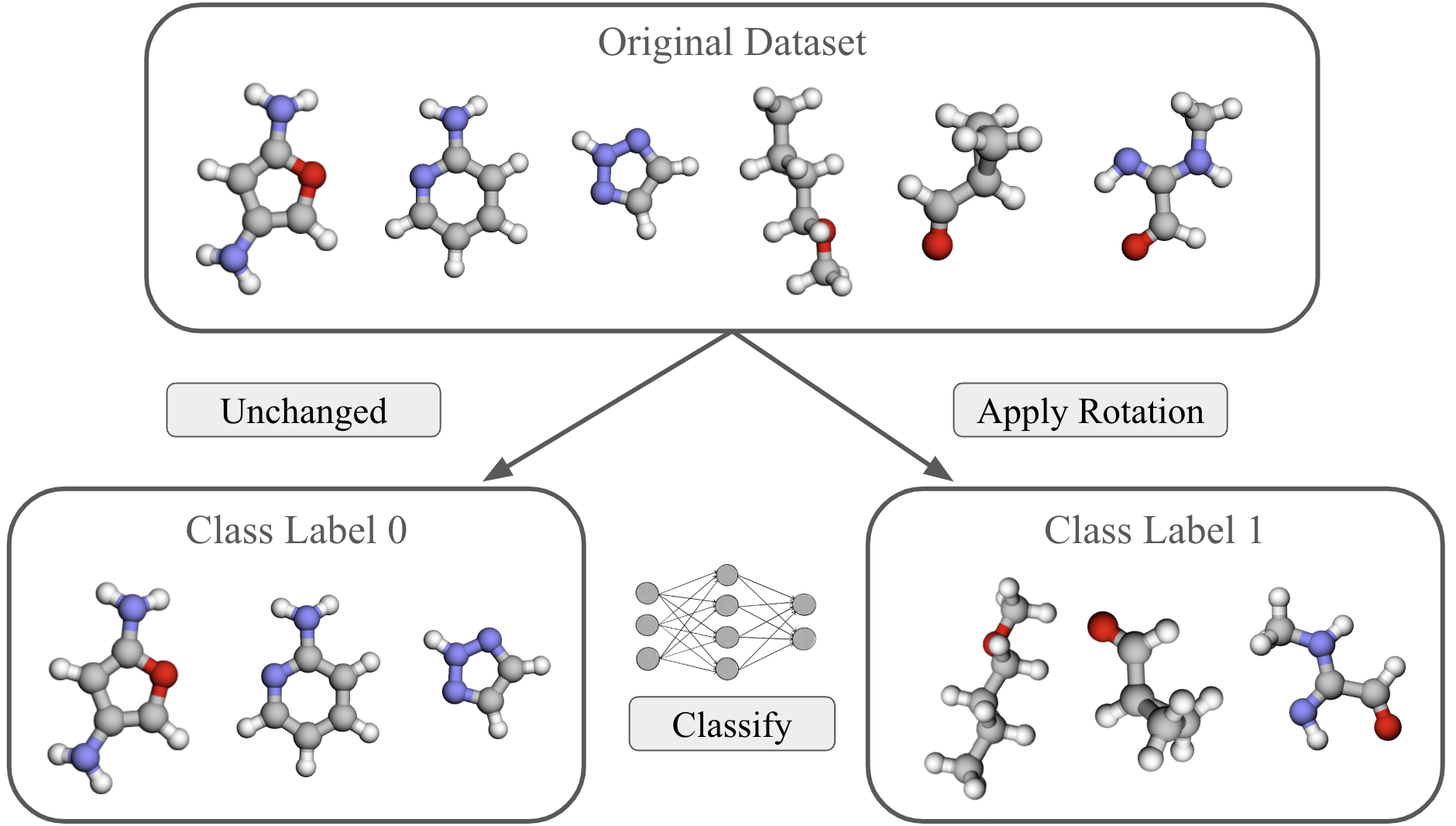

Our approach: a two-sample classifier test. We train a small neural network to distinguish samples from \(p_X\) (original) versus \(\bar{p}_X\) (randomly transformed), and use test accuracy as the metric:

\[m(p_X) := \mathbb{E}_{(x,c) \in D^*_{\text{test}}} \left[ \mathbf{1}\big(\text{NN}(x) = c\big) \right]\]

Algorithm:

- Split the dataset in half.

- Apply random \(g \sim G\) to one half — these approximate \(\bar{p}_X\) (label 1).

- Keep the other half unchanged as \(p_X\) (label 0).

- Train a binary classifier and report test accuracy as \(m(p_X)\).

Interpretation:

- \(m(p_X) \approx 0.5\): data is (approximately) group-invariant — the classifier can’t do better than chance.

- \(m(p_X) \approx 1\): data is strongly canonicalized — the classifier easily detects the preferred orientations.
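The algorithm and its interpretation can be sketched on toy data. This is a minimal, dependency-free illustration, not the authors' implementation: it assumes 2D points under planar rotations, and it swaps the small neural network for a logistic regression on quadratic features trained by SGD. The function names, the toy data generator, and the hyperparameters are all hypothetical.

```python
import math
import random

def rotate(x, theta):
    c, s = math.cos(theta), math.sin(theta)
    return (c * x[0] - s * x[1], s * x[0] + c * x[1])

def sample_points(n, canonicalized, rng):
    """Toy 2D data: angle concentrated near 0 (canonicalized) or uniform."""
    pts = []
    for _ in range(n):
        angle = rng.gauss(0.0, 0.3) if canonicalized else rng.uniform(-math.pi, math.pi)
        radius = rng.uniform(0.5, 1.5)
        pts.append((radius * math.cos(angle), radius * math.sin(angle)))
    return pts

def features(x):
    """Bias plus linear and quadratic terms; stands in for the small NN."""
    return (1.0, x[0], x[1], x[0] * x[0], x[1] * x[1], x[0] * x[1])

def predict_proba(w, x):
    z = sum(wi * fi for wi, fi in zip(w, features(x)))
    return 1.0 / (1.0 + math.exp(-z))

def symmetry_breaking_metric(points, rng, epochs=30, lr=0.1):
    """Two-sample classifier test: returns held-out accuracy m(p_X)."""
    points = list(points)
    rng.shuffle(points)
    half = len(points) // 2
    # Label 1: randomly rotated half (approximates the symmetrized density).
    # Label 0: untouched half (samples from p_X).
    data = [(rotate(x, rng.uniform(0.0, 2.0 * math.pi)), 1) for x in points[:half]]
    data += [(x, 0) for x in points[half:]]
    rng.shuffle(data)
    split = int(0.7 * len(data))
    train, test = data[:split], data[split:]

    w = [0.0] * 6
    for _ in range(epochs):
        rng.shuffle(train)
        for x, c in train:
            err = predict_proba(w, x) - c  # gradient of the log-loss w.r.t. the logit
            w = [wi - lr * err * fi for wi, fi in zip(w, features(x))]

    correct = sum((predict_proba(w, x) > 0.5) == (c == 1) for x, c in test)
    return correct / len(test)

rng = random.Random(0)
m_canonical = symmetry_breaking_metric(sample_points(2000, True, rng), rng)
m_symmetric = symmetry_breaking_metric(sample_points(2000, False, rng), rng)
print(f"canonicalized: m = {m_canonical:.2f}, symmetric: m = {m_symmetric:.2f}")
```

On the canonicalized toy data the accuracy should land well above chance, while on the rotation-symmetric data it should stay near 0.5. Because the rotated half still overlaps the preferred orientation some of the time, even an ideal classifier cannot reach exactly 1 in this setup.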

Task-Dependent Metric

\(m(p_X)\) tells us whether the data breaks symmetry — but not whether that matters for the specific task. Consider MNIST digits 6 and 9: they are canonicalized in a way that is directly predictive of the label. Augmenting away orientation destroys this useful signal.

We introduce \(t(p_{X,Y})\), a metric of task-useful distributional symmetry breaking. Let \(c\colon\mathcal{X} \rightarrow G\) be a canonicalization function (implemented as a randomly initialized, untrained equivariant network). Since data augmentation destroys information in \(c(x)\), we ask: how much does \(c(x)\) predict the label \(f(x)\)?

We compare:

- \(\mathcal{L}(c(x) \to f(x))\): loss when predicting labels from orientations (canonical orientation intact)

- \(\mathcal{L}_{\text{rot}} = \mathcal{L}(c(gx) \to f(gx),\, g \sim G)\): same, but with random rotations applied — removing orientation information

The metric \(t\) summarizes this comparison. Interpretation:

- \(t \gg 1\): orientations carry task-relevant signal, so augmentation likely hurts by discarding it.

- \(t \approx 1\): orientation is not predictive, so augmentation is likely safe or beneficial.
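The comparison can be illustrated on toy data. The text above does not spell out how \(t\) is formed from the two losses, so this sketch assumes the ratio \(\mathcal{L}_{\text{rot}} / \mathcal{L}\), which is consistent with the stated interpretation. It also makes several simplifying assumptions that are not from the paper: the canonicalization \(c(x)\) is taken to be a single planar angle sampled directly, labels are binary, and the predictor is a histogram classifier over angle bins with cross-entropy loss. All names are hypothetical.

```python
import math
import random

N_BINS = 16

def angle_bin(theta):
    """Discretize an angle into one of N_BINS bins covering the circle."""
    wrapped = (theta + math.pi) % (2.0 * math.pi)
    return min(int(wrapped / (2.0 * math.pi) * N_BINS), N_BINS - 1)

def label_loss(train, test):
    """Cross-entropy of predicting a binary label from the binned orientation,
    using Laplace-smoothed per-bin label frequencies fit on `train`."""
    counts = [[1.0, 1.0] for _ in range(N_BINS)]
    for theta, y in train:
        counts[angle_bin(theta)][y] += 1.0
    total = 0.0
    for theta, y in test:
        c = counts[angle_bin(theta)]
        total -= math.log(c[y] / (c[0] + c[1]))
    return total / len(test)

def sample(n, predictive, rng):
    """(orientation, label) pairs. If `predictive`, the class decides the pose
    (as with 6 vs 9); otherwise both classes share one canonical pose."""
    data = []
    for _ in range(n):
        y = rng.randrange(2)
        mean = 0.5 * math.pi * y if predictive else 0.0
        data.append((rng.gauss(mean, 0.2), y))
    return data

def randomly_rotate(data, rng):
    """c(gx) = g c(x): rotating the input shifts the orientation by g,
    while the (invariant) label is unchanged."""
    return [(theta + rng.uniform(0.0, 2.0 * math.pi), y) for theta, y in data]

def task_metric(predictive, rng, n=2000):
    train, test = sample(n, predictive, rng), sample(n, predictive, rng)
    loss = label_loss(train, test)
    loss_rot = label_loss(randomly_rotate(train, rng), randomly_rotate(test, rng))
    return loss_rot / loss  # ASSUMED form of t; not stated in the text

rng = random.Random(0)
t_predictive = task_metric(True, rng)
t_uninformative = task_metric(False, rng)
print(f"t (pose predicts label) = {t_predictive:.1f}, "
      f"t (pose uninformative) = {t_uninformative:.2f}")
```

Both toy datasets are strongly canonicalized, so \(m(p_X)\) would be high for each. Only the first has a pose that predicts the label, which is the distinction \(t\) is meant to capture.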

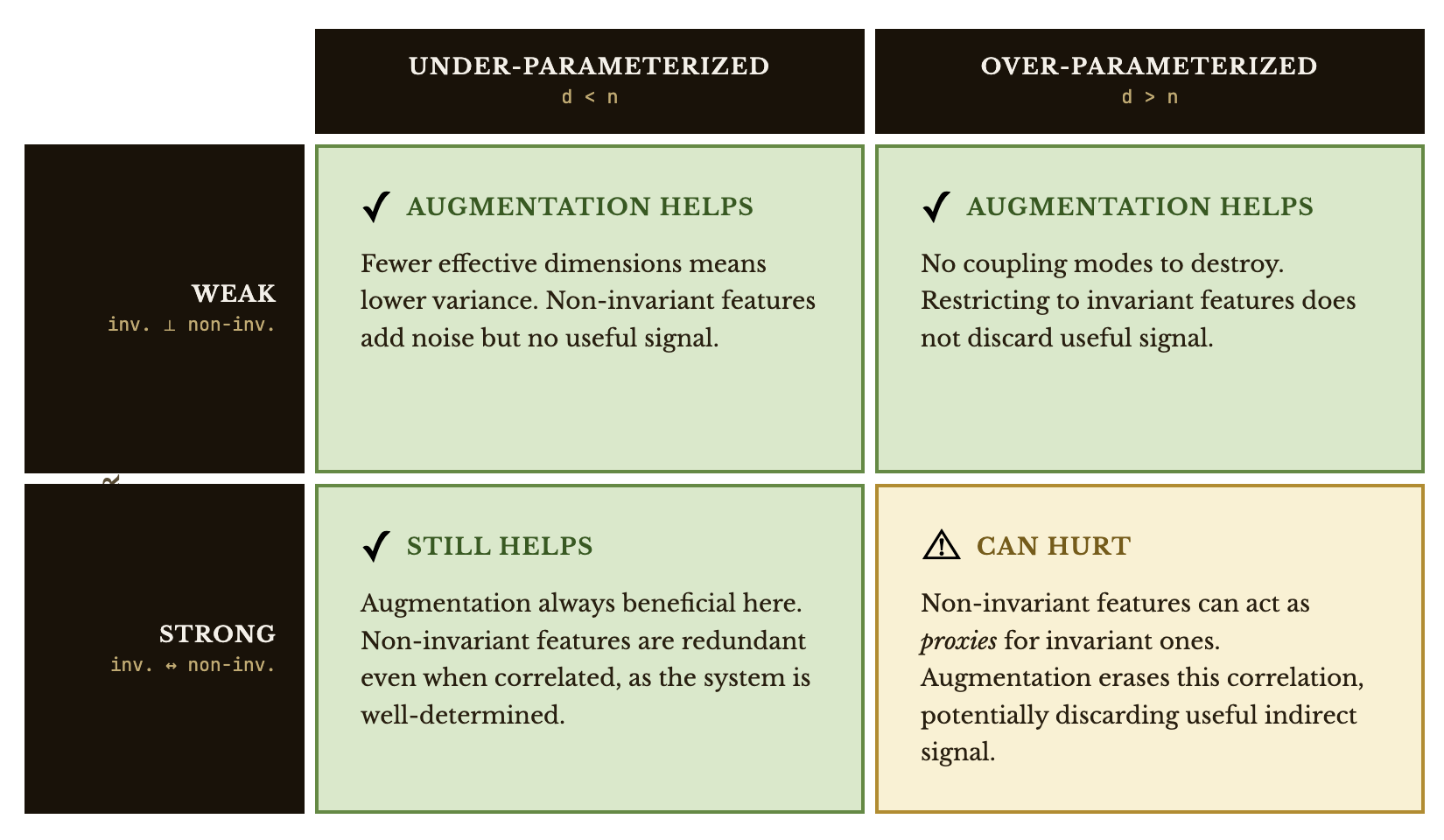

Theory

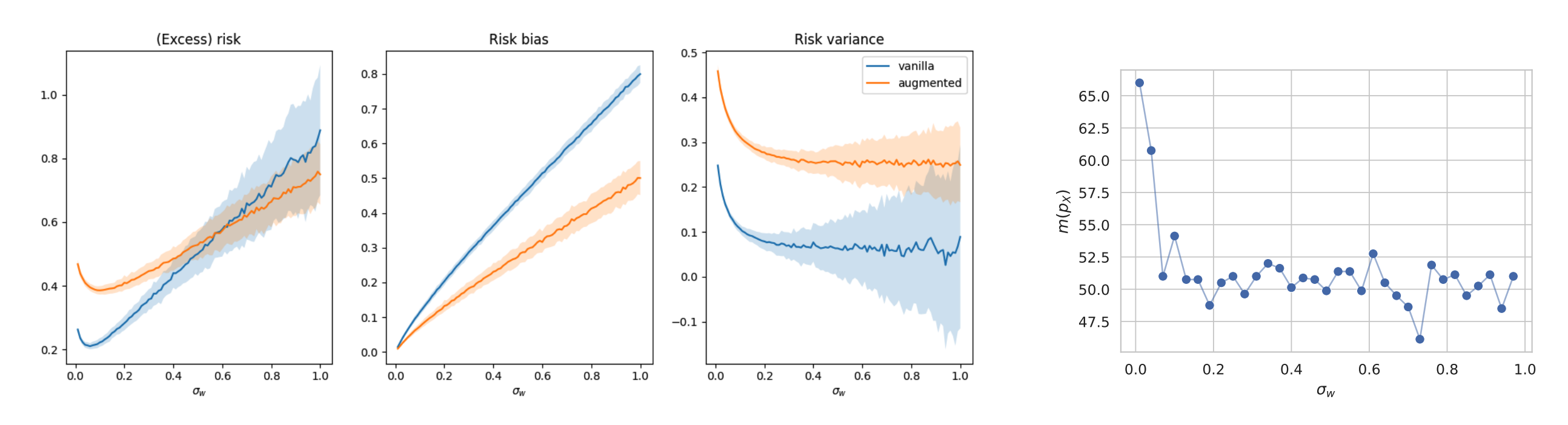

To understand when augmentation can backfire, we analyze a tractable setting: high-dimensional ridge regression where the true function is invariant, but the data distribution may not be. Our theory shows that even when the ground-truth function is invariant, data augmentation and test-time symmetrization can be harmful when invariant and non-invariant features are strongly correlated.

Takeaway: Symmetry enforcement can hurt by discarding signal that, while technically non-invariant, is informative about the label via its correlation with invariant features.

We thus find invariance interacts with data geometry and high-dimensional statistics in subtle ways. When the data distribution breaks symmetry — even slightly — enforcing invariance can destroy useful signal. And in modern over-parameterized regimes, reducing effective dimension can itself introduce instability.
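A one-dimensional toy case shows the mechanism, though it is far simpler than the high-dimensional setting analyzed in the paper. The assumptions here are illustrative and not from the source: the group is the sign flip \(x \mapsto -x\), the invariant target is \(|x|\), the data are canonicalized to \(x > 0\), and the model is a linear ridge regression on the raw feature \(x\). On canonicalized data the non-invariant feature \(x\) coincides with the invariant feature \(|x|\), so a linear model fits well. Augmentation removes that correlation.

```python
import random

def fit_ridge_1d(xs, ys, lam):
    """Closed-form 1D ridge regression with an unpenalized intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / n
    var = sum((x - mx) ** 2 for x in xs) / n
    w = cov / (var + lam)
    return w, my - w * mx

def mse(w, b, xs, ys):
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

rng = random.Random(0)
target = abs  # invariant under the sign flip x -> -x

# Canonicalized distribution: only x > 0 is ever observed, at train and test time.
x_train = [rng.uniform(0.0, 1.0) for _ in range(2000)]
x_test = [rng.uniform(0.0, 1.0) for _ in range(2000)]
y_train = [target(x) for x in x_train]
y_test = [target(x) for x in x_test]

# Augmentation: apply a random group element (identity or sign flip).
x_aug = [x * rng.choice((1.0, -1.0)) for x in x_train]
y_aug = [target(x) for x in x_aug]  # labels are unchanged because the target is invariant

w_plain, b_plain = fit_ridge_1d(x_train, y_train, lam=1e-3)
w_aug, b_aug = fit_ridge_1d(x_aug, y_aug, lam=1e-3)

mse_plain = mse(w_plain, b_plain, x_test, y_test)
mse_aug = mse(w_aug, b_aug, x_test, y_test)
print(f"test MSE without augmentation: {mse_plain:.5f}")
print(f"test MSE with augmentation:    {mse_aug:.5f}")
```

With augmentation, the fitted slope collapses toward zero and the model predicts roughly the mean of \(|x|\), so its error on the canonicalized test set is close to the variance of the target.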

This raises a puzzle: if datasets like QM9 are strongly canonicalized, our theory predicts augmentation should hurt — yet empirically it helps. We investigate this discrepancy below.

Experiments

How canonicalized are real datasets?

We measure \(m(p_X)\) on multiple benchmark datasets. Strikingly, many widely-used benchmarks are strongly canonicalized — particularly molecular and materials science datasets — even though equivariant methods are routinely applied to them under the assumption of distributional symmetry.

Takeaway: Real-world datasets often deviate substantially from the symmetry assumptions built into equivariant models.

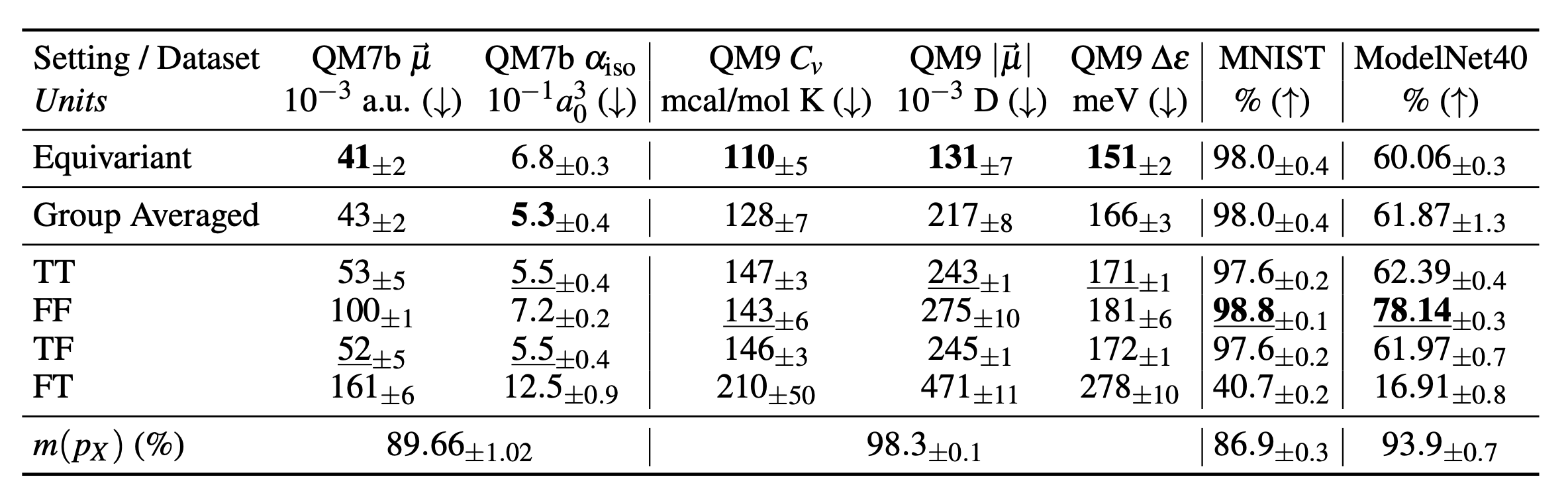

Does canonicalization predict whether augmentation helps?

We evaluate equivariant, group-averaged, and stochastic group-averaged models on each dataset. The results are summarized below.

| Dataset | Symmetry | \(m(p_X)\) | Effect of Augmentation / Equivariance |
|---|---|---|---|
| MNIST | \(C_4\) rotations | High | Minimal benefit; slight harm |
| ModelNet40 | SO(3) rotations | High (class-specific) | Reduces performance |
| QM9 | SO(3) rotations | High (CORINA preprocessing) | Improves nearly all properties |
| QM7b | SO(3) rotations | High | Beneficial, especially for non-scalar properties (e.g. dipole) |

While one might expect augmentation to consistently hurt on canonicalized datasets, molecular datasets (QM7b and QM9) defy this picture.

Takeaway: Whether symmetry methods help or hurt is dataset-dependent — canonicalization alone does not predict the outcome.

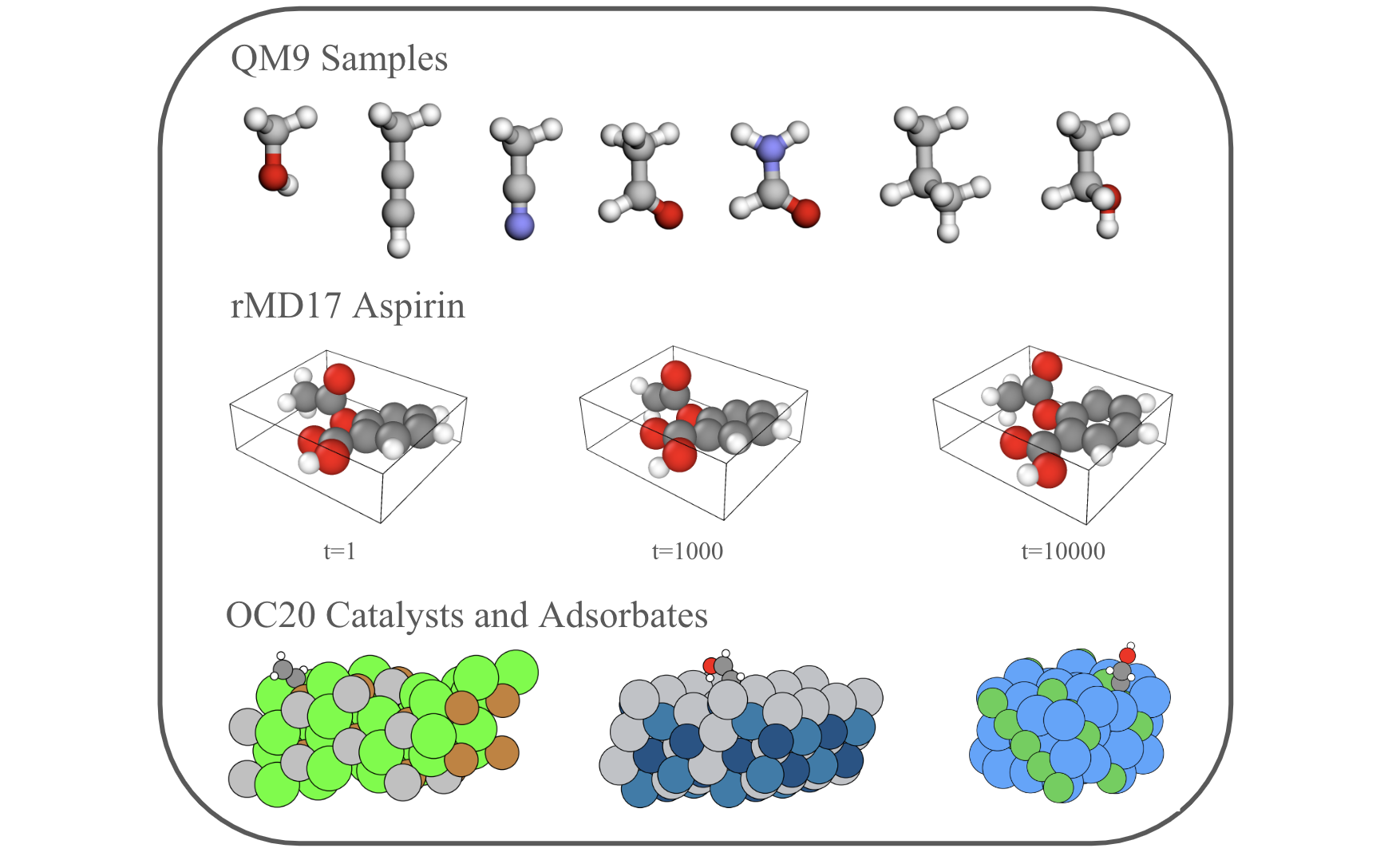

Additional Materials Science Datasets

We extend the analysis to additional materials science datasets:

- rMD17: Molecular dynamics trajectories for small molecules. The degree of distributional symmetry breaking varies widely between molecules, reflecting differences in initial conditions and molecular geometry.

- OC20: Adsorbates on periodic crystalline catalysts. Both the adsorbate alone and the adsorbate+catalyst system are highly canonicalized, likely due to the catalyst surface being aligned with the \(xy\) plane.

- LLM crystal dataset: Crystals serialized to text for LLM generation. Atoms must be listed in some order, and the authors independently noted that permutation augmentations hurt generative performance. We trained a classifier head on a pretrained DistilBERT transformer to test this, and found \(m(p_X) = 95\%\): strong permutation canonicalization due to conventions in atom ordering.

Takeaway: Distributional symmetry breaking is widespread across data types and modalities — not just 3D point clouds.

Hypotheses for Empirical Behavior

We present hypotheses for the differing performance of equivariant models/data augmentation across datasets.

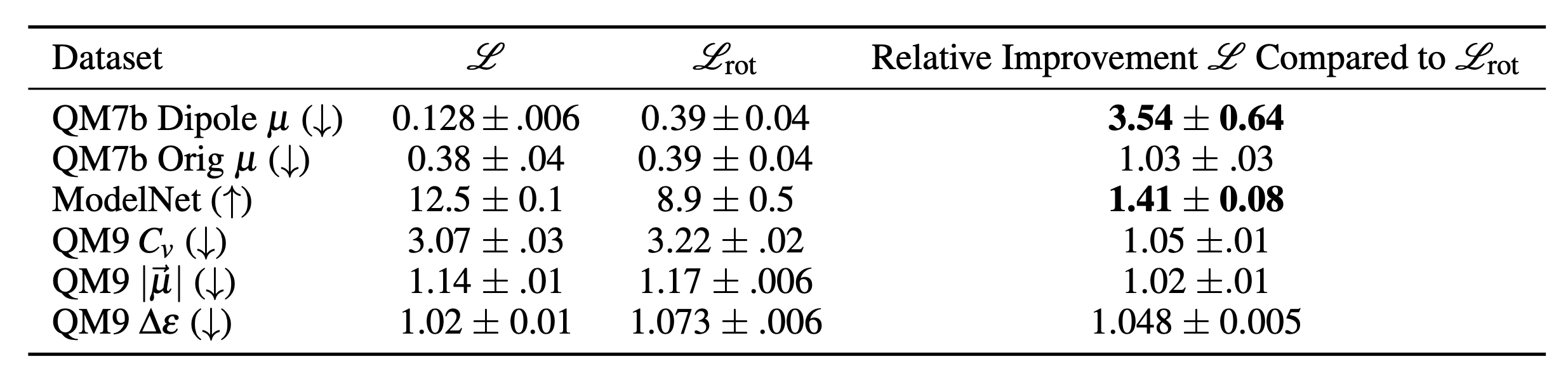

Task-Dependent Metric

We apply \(t(p_{X,Y})\) to each dataset to ask: does the canonical orientation actually carry task-relevant information?

- QM7b dipole (artificial canonicalization): Molecules are aligned so their dipole moments point along the \(z\) axis, so orientation is directly predictive of the label. A non-equivariant model can exploit this; an equivariant one cannot. As expected, no-augmentation (FF) outperforms the equivariant baseline, and \(t \gg 1\).

- ModelNet40: Same story: equivariance hurts and \(t\) is large. Object identity is correlated with how the object is canonically oriented.

- QM9: \(t \approx 1\), i.e. canonical orientation carries little task-relevant information globally. Equivariance does destroy orientation, but since that orientation wasn’t predictive to begin with, nothing useful is lost. This is consistent with equivariance helping.

Takeaway: \(t\) is large for datasets where equivariance hurts, and small where it helps — it tells us whether augmentation discards task-relevant signal.

But \(t\) only explains why equivariance doesn’t hurt on QM9 — not why it actively improves performance. For that, we need to look at local structure.

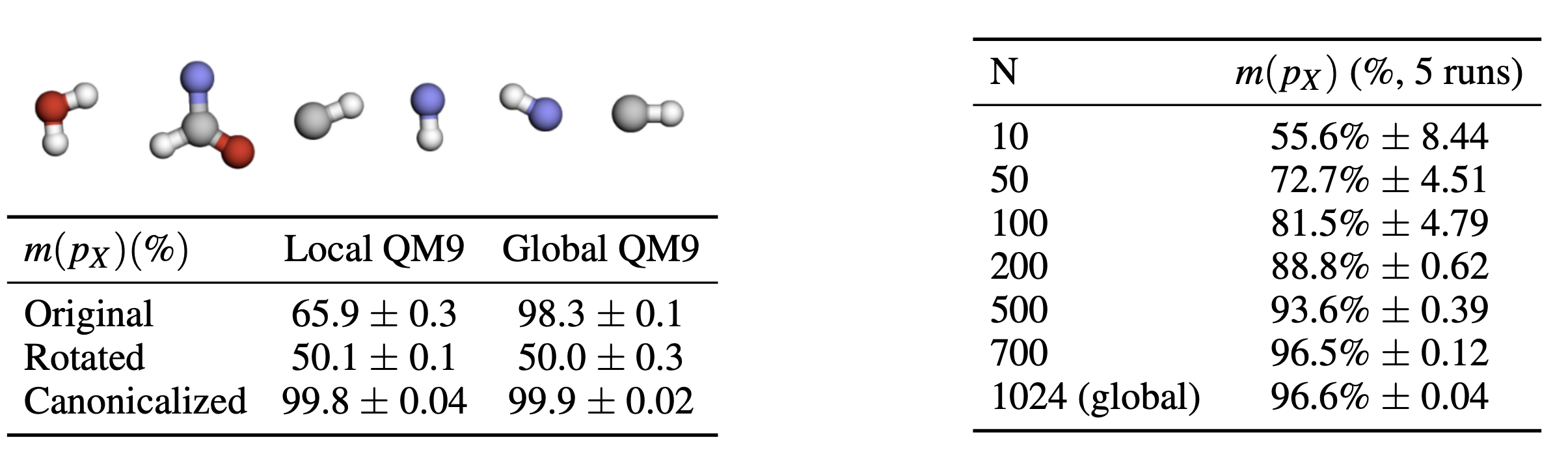

Locality

Equivariant models compute locally equivariant features over receptive fields, so we also measure \(m(p_X)\) on local neighborhoods:

- QM9: Local bond neighborhoods have much lower \(m(p_X)\) than the full molecule — local structure is close to isotropic even when the global dataset is canonicalized. Equivariant models can exploit this local symmetry.

- ModelNet40: Local neighborhoods also show lower \(m(p_X)\) at small sizes, but canonical alignment re-emerges as neighborhoods grow — the canonical orientation is object-level and tightly coupled to the task.

Based on these findings, we provide the following flowchart as a practical guide for deciding whether to use equivariant methods or data augmentation on a new dataset:

Conclusion

We provide interpretable metrics for diagnosing distributional symmetry breaking — no domain knowledge required. Every benchmark dataset we tested showed a high degree of symmetry-breaking, yet augmentation only hurt performance on ModelNet40.

Three implications stand out. First, non-equivariant models evaluated only on in-distribution data may appear accurate but fail under transformations — assessing whether this matters requires domain expertise (see the flowchart above). Second, data augmentation is often treated as universally beneficial for invariant tasks, but we show it can hurt. Third, if already-canonicalized molecular datasets still benefit from equivariance, equivariant models must provide some additional, possibly domain-specific benefit beyond global symmetry enforcement — a compelling open question.